Gradient Boosting Notes and Pictures

On Friday, June 16, Dr. Ravindran gave a talk on gradient boosting. The key points to remember are:

- Boosting is a technique to create strong classifiers out of weaker ones.

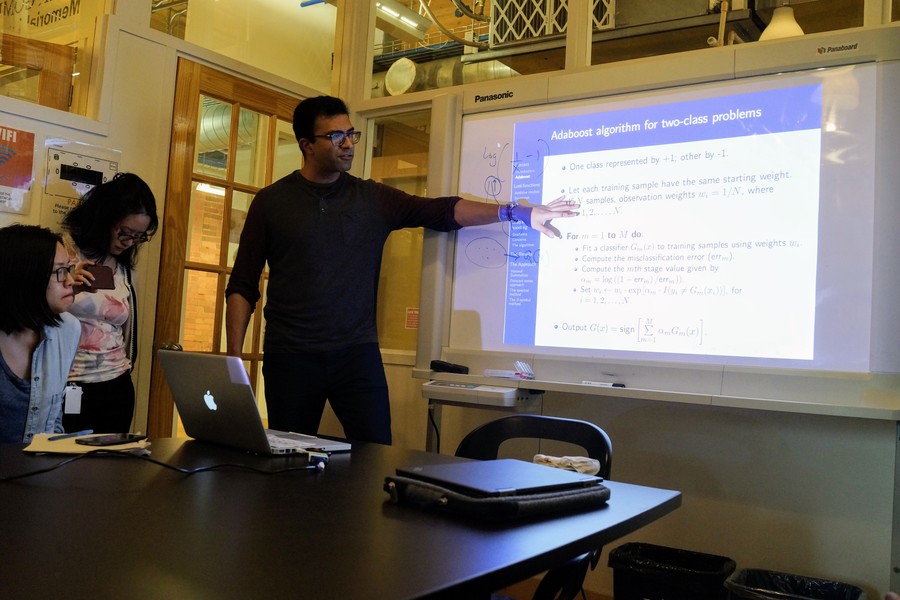

- The first practical boosting algorithm is AdaBoost, introduced by Freund and Schapire in the mid-1990s. It assigns each weak classifier a weight that reflects its performance, and the final prediction is a weighted vote of all of them.

- Boosted models make great out-of-the-box classifiers.

- You can find the slides from the talk here.
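To make the AdaBoost weighting idea from the notes more concrete, here is a minimal sketch in plain Python. It is not taken from the talk: the toy 1-D data set, the choice of decision stumps as weak learners, and the helper names (`train_stump`, `stump_predict`, `adaboost`, `predict`) are all assumptions made for illustration.

```python
import math

def stump_predict(x, threshold, polarity):
    # A decision stump: predicts `polarity` at or above the threshold, the opposite below it.
    return polarity if x >= threshold else -polarity

def train_stump(xs, ys, weights):
    # Try every threshold/polarity pair and keep the one with the lowest weighted error.
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            err = sum(
                w for w, x, y in zip(weights, xs, ys)
                if stump_predict(x, threshold, polarity) != y
            )
            if best is None or err < best[0]:
                best = (err, threshold, polarity)
    return best

def adaboost(xs, ys, rounds=3):
    n = len(xs)
    weights = [1.0 / n] * n  # start with uniform weights over the training examples
    ensemble = []
    for _ in range(rounds):
        err, threshold, polarity = train_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)  # keep the log below finite
        # Classifier weight: larger when the weighted error is smaller.
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, threshold, polarity))
        # Increase the weight of misclassified examples, decrease the rest, then renormalize.
        weights = [
            w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
            for w, x, y in zip(weights, xs, ys)
        ]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict(ensemble, x):
    # Final prediction is the sign of the alpha-weighted vote of all stumps.
    score = sum(alpha * stump_predict(x, t, p) for alpha, t, p in ensemble)
    return 1 if score >= 0 else -1

# Toy data (assumed for illustration): labels are +1 / -1.
xs = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]

ensemble = adaboost(xs, ys, rounds=3)
predictions = [predict(ensemble, x) for x in xs]
print(predictions)
```

On this toy set no single stump separates the labels, but the weighted vote of three stumps should classify every training point correctly, which is the "strong classifier out of weaker ones" idea in miniature.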

Pics from the talk: